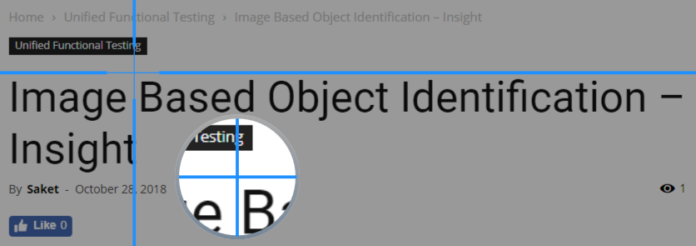

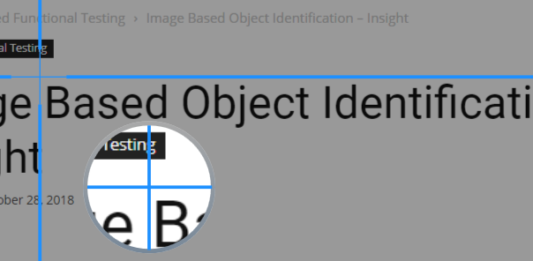

Image-based object recognition was first introduced in UFT (QTP) version 11.5. It lets UFT recognize objects in the Application Under Test (AUT) by the “image” of the test objects instead of by their properties. This is commonly known as Insight object identification, because UFT needs to be working in “Insight” mode. It is useful for testing controls in applications that UFT does not support, or for specific tests that call for it. While exploring Insight earlier, I came across this YouTube video of a memory game, which is a good example of its capabilities.

https://automated-360.com/unified-functional-testing/the-power-of-insight-object/

Insight is an image-based object identification capability. It recognizes objects in the application by what they look like, instead of by the properties that are part of their design. For Insight test objects, UFT stores an image of the object and uses this image as the main description property to identify the object in the application.



Insight Object Identification

In Insight mode, UFT stores the image of an object in the object repository, along with an ordinal identifier when one is needed. These objects are called Insight objects or Insight test objects. UFT stores an ordinal identifier only if two or more objects look the same, i.e. their images are very similar. The image becomes the main description property that UFT uses to identify the object.
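The role of the ordinal identifier can be shown with a minimal Python sketch. It assumes that the screen and the stored image are small grids of grayscale pixel values. This is a conceptual illustration, not UFT's actual matching algorithm, and the functions `find_matches` and `locate` are hypothetical. If exactly one region matches the image, no ordinal is needed. If several identical regions match, the ordinal selects one of them in reading order (top to bottom, left to right).

```python
from typing import List, Optional, Tuple

Image = List[List[int]]  # grayscale pixel values, one list per row (assumed format)


def find_matches(screen: Image, template: Image) -> List[Tuple[int, int]]:
    """Return the top-left (row, col) of every exact match of template in screen.

    Rows are scanned top to bottom and columns left to right, so the
    result is already in reading order.
    """
    th, tw = len(template), len(template[0])
    sh, sw = len(screen), len(screen[0])
    matches = []
    for r in range(sh - th + 1):
        for c in range(sw - tw + 1):
            if all(screen[r + i][c:c + tw] == template[i] for i in range(th)):
                matches.append((r, c))
    return matches


def locate(screen: Image, template: Image, ordinal: Optional[int] = None) -> Tuple[int, int]:
    """Resolve one object; a (hypothetical) ordinal is required only when the image is ambiguous."""
    matches = find_matches(screen, template)
    if not matches:
        raise LookupError("no object matches the stored image")
    if ordinal is None:
        if len(matches) > 1:
            raise LookupError("several objects look the same; an ordinal identifier is needed")
        return matches[0]
    return matches[ordinal]


# Example: two identical 2x2 "buttons" on the same row of a tiny screen.
screen = [
    [0, 0, 0, 0, 0, 0],
    [0, 9, 9, 0, 9, 9],
    [0, 9, 9, 0, 9, 9],
]
button = [[9, 9], [9, 9]]
print(find_matches(screen, button))       # [(1, 1), (1, 4)]
print(locate(screen, button, ordinal=1))  # (1, 4)
```

Calling `locate(screen, button)` without an ordinal raises `LookupError`, because the stored image alone cannot tell the two buttons apart.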

You can also use visual relation identifiers with Insight objects. They improve object identification and help you avoid ordinal identifiers. UFT can also identify an object that does not exactly match the stored image. To allow this, add the “similarity” property to the test object description.

Adding Insight Objects

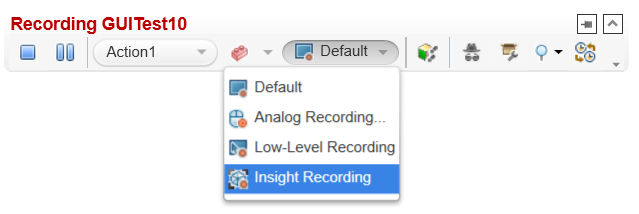

UFT provides two ways to add Insight objects: while recording, or by adding them directly to the Object Repository (OR). To add them while recording, choose the “Insight Recording” recording mode. In this mode, UFT records every object it cannot identify using the appropriate add-ins as an Insight object and stores its image. Objects that UFT can identify are recorded in the usual way.

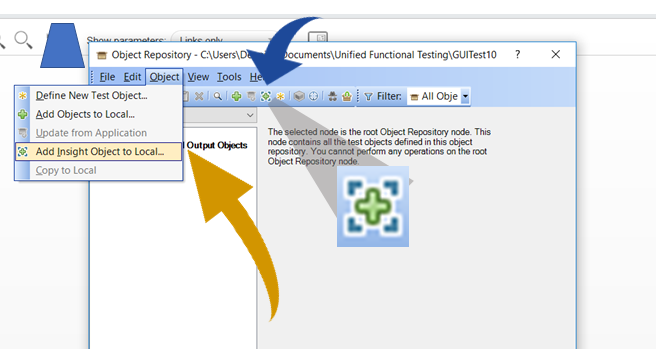

The other option is to add objects directly to the OR. You can use the “Add Insight Objects to Object Repository” button on the toolbar or the menu option “Object > Add Insight Object to local”.


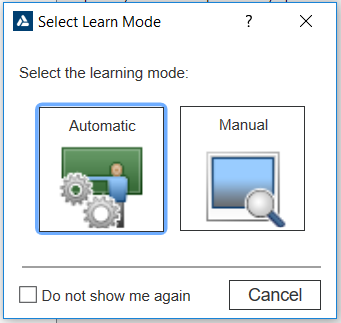

When you add Insight objects to the OR, UFT offers two learn modes: Manual and Automatic. They differ only in how the object's boundaries are defined. In Automatic mode, UFT defines the boundaries automatically. In Manual mode, you define them yourself.

Adding Insight Objects in Automatic Mode

To use Automatic mode, select “Automatic”, then point to the object you want to capture and click it. UFT captures and stores the image of the clicked object. In some cases, the captured image may include extra, unnecessary areas, or only part of the required object. UFT lets you exclude areas from the captured image before you save it in the OR.
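A short Python sketch shows the effect of excluding an area. It assumes that images are grids of grayscale values and that excluded areas are rectangles in the stored image's own coordinates. The names are hypothetical, and this is not how UFT implements the feature. It only illustrates why excluding a region, such as part of the capture that changes from run to run, lets an otherwise identical image still match.

```python
from typing import List, Tuple

Image = List[List[int]]           # grayscale pixel values, one list per row (assumed format)
Rect = Tuple[int, int, int, int]  # (top, left, bottom, right); bottom/right exclusive


def in_excluded_area(r: int, c: int, excluded: List[Rect]) -> bool:
    """True if pixel (r, c) lies inside any excluded rectangle."""
    return any(top <= r < bottom and left <= c < right
               for top, left, bottom, right in excluded)


def matches_ignoring(candidate: Image, template: Image, excluded: List[Rect]) -> bool:
    """Compare two same-sized images pixel by pixel, skipping excluded areas."""
    if len(candidate) != len(template) or len(candidate[0]) != len(template[0]):
        return False
    for r, row in enumerate(template):
        for c, pixel in enumerate(row):
            if not in_excluded_area(r, c, excluded) and candidate[r][c] != pixel:
                return False
    return True


stored = [[1, 1, 1], [1, 5, 1], [1, 1, 1]]
on_screen = [[1, 1, 1], [1, 7, 1], [1, 1, 1]]  # only the center pixel differs
print(matches_ignoring(on_screen, stored, []))              # False
print(matches_ignoring(on_screen, stored, [(1, 1, 2, 2)]))  # True
```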

Adding Insight Objects in Manual Mode

Manual mode lets you define the object's boundaries yourself by dragging the mouse over the object area. To use it, select “Manual” in the “Select Learn Mode” dialogue box.

Object Configuration

UFT can use an ordinal identifier to create a unique description for the object.

Other aspects of object configuration, such as mandatory and assistive properties, and smart identification, are not relevant for Insight test objects.

Visual Relation Identifiers

You can also use visual relation identifiers to improve object identification.
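The following Python sketch illustrates how a visual relation can replace an ordinal identifier. It uses hypothetical names and is not UFT's API. Among several identical-looking candidates, it picks the one closest to the right of an anchor object on the same row, such as a text box next to its label. Boxes are assumed to be `(top, left, bottom, right)` screen coordinates.

```python
from typing import List, Optional, Tuple

Box = Tuple[int, int, int, int]  # (top, left, bottom, right); bottom/right exclusive


def on_same_row(a: Box, b: Box) -> bool:
    """True if the two boxes overlap vertically."""
    return a[0] < b[2] and b[0] < a[2]


def nearest_right_of(anchor: Box, candidates: List[Box]) -> Optional[Box]:
    """Return the candidate closest to the right of the anchor on the same row, if any."""
    to_the_right = [b for b in candidates
                    if b[1] >= anchor[3] and on_same_row(anchor, b)]
    if not to_the_right:
        return None
    # Smallest gap between the anchor's right edge and the candidate's left edge.
    return min(to_the_right, key=lambda b: b[1] - anchor[3])


# Example: a "Password:" label and three identical-looking text boxes.
password_label = (50, 10, 70, 90)
text_boxes = [(20, 100, 40, 250), (50, 100, 70, 250), (50, 300, 70, 400)]
print(nearest_right_of(password_label, text_boxes))  # (50, 100, 70, 250)
```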

Similarity

Add the similarity description property to the test object description.

This property is a percentage that specifies how similar a control in the application must be to the test object image to be considered a match.
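Here is a minimal Python sketch of a similarity threshold. It assumes that the percentage is simply the share of identical pixels between two same-sized grayscale images. UFT does not necessarily compute similarity this way; the metric and the names `similarity_percent` and `is_match` are assumptions made for illustration. The point is that a lower `similarity` value tolerates more visual differences before a control stops counting as a match.

```python
from typing import List

Image = List[List[int]]  # grayscale pixel values, one list per row (assumed format)


def similarity_percent(candidate: Image, template: Image) -> float:
    """Share of pixel positions (0-100) where two same-sized images are identical."""
    if len(candidate) != len(template) or any(
            len(c) != len(t) for c, t in zip(candidate, template)):
        raise ValueError("images must have the same dimensions")
    total = sum(len(row) for row in template)
    equal = sum(1
                for cand_row, tmpl_row in zip(candidate, template)
                for a, b in zip(cand_row, tmpl_row)
                if a == b)
    return 100.0 * equal / total


def is_match(candidate: Image, template: Image, similarity: float) -> bool:
    """A candidate matches if it is at least `similarity` percent similar."""
    return similarity_percent(candidate, template) >= similarity


stored = [[1, 2], [3, 4]]
on_screen = [[1, 2], [3, 0]]  # one of four pixels differs
print(similarity_percent(on_screen, stored))  # 75.0
print(is_match(on_screen, stored, 70))        # True
print(is_match(on_screen, stored, 80))        # False
```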

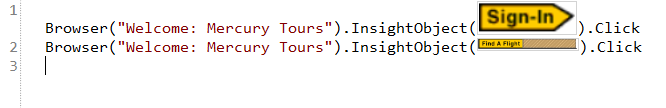

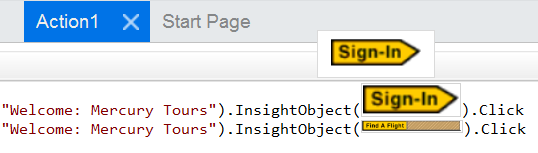

Scripting with Insight Object

Scripting with Insight objects is easy and works the same way as scripting with any other object. The only difference is that the step shows the test object image in place of the test object name. The action you perform on the object depends on the type of object.

When you hover the cursor over the object in your code, UFT shows an enlarged image of the object. Clicking the image takes you to the corresponding object in the Object Repository.

Settings/Options to work with Insight

UFT provides option settings that customize how it handles Insight objects when it creates test objects and during run sessions. To open them:

Go to Tools > Options.

Select the “GUI Testing” tab.

Select the “Insight” node.

When Recording a Test Object

Save the clicked coordinates as the test object’s ClickPoint – Check this option if you want UFT to save the coordinates where you click as the object's click point.
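The following hypothetical Python sketch illustrates the idea of saving a click point. At record time, the clicked coordinates are stored as an offset from the object's top-left corner. At run time, that offset is applied to wherever the image is found. Falling back to the center of the object when no click point is saved is an assumption made for this sketch. Boxes are `(x, y, width, height)`.

```python
from typing import Optional, Tuple

Point = Tuple[int, int]          # (x, y) screen coordinates
Box = Tuple[int, int, int, int]  # (x, y, width, height) of the object on screen


def record_click_point(object_box: Box, clicked: Point) -> Point:
    """Store the click as an offset from the object's top-left corner."""
    x, y, _, _ = object_box
    return (clicked[0] - x, clicked[1] - y)


def click_target(found_box: Box, click_point: Optional[Point]) -> Point:
    """Where to click at run time; falls back to the object's center (an assumption)."""
    x, y, width, height = found_box
    if click_point is None:
        return (x + width // 2, y + height // 2)
    return (x + click_point[0], y + click_point[1])


offset = record_click_point((100, 200, 40, 20), (105, 210))
print(offset)                                   # (5, 10)
print(click_target((300, 50, 40, 20), offset))  # (305, 60)
print(click_target((300, 50, 40, 20), None))    # (320, 60)
```

Storing an offset rather than absolute coordinates keeps the click on the same spot of the object even when the object appears elsewhere on the screen.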

When Running a Step

Display mouse operations – Check this option if you want UFT to show the mouse click and drag operations it performs on Insight objects.

Editing Steps

Show test object image in steps – Check this option if you want to display Insight test object images in steps in the Editor. If cleared, UFT displays the test object names instead.

Display Select Learn Mode dialogue box – Check this option if you want UFT to display the ‘Select Learn Mode’ dialogue box. If this option is cleared, UFT no longer displays this dialogue box when you add Insight objects to the Object Repository.

When Taking Snapshots

Limit test object image margin in a snapshot – Check this option to limit the snapshot size when adding a new Insight test object. Specify the margin size in the Maximum pixels around the image box. If this option is cleared, UFT includes the whole screen in the snapshot.

Maximum pixels around the image – The number of pixels of surrounding area that UFT includes in the snapshot around the test object image.
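The two margin options can be sketched in Python as follows. The sketch assumes that boxes are `(left, top, right, bottom)` screen coordinates with exclusive right and bottom edges, and the function name `snapshot_region` is hypothetical. When the margin is limited, the snapshot is the image box grown by the maximum number of pixels on each side and clipped to the screen. Otherwise, it is the whole screen.

```python
from typing import Tuple

Box = Tuple[int, int, int, int]  # (left, top, right, bottom); right/bottom exclusive


def snapshot_region(image_box: Box, screen_size: Tuple[int, int],
                    max_pixels: int, limit_margin: bool = True) -> Box:
    """Region of the screen saved as the snapshot for a new Insight test object."""
    screen_width, screen_height = screen_size
    if not limit_margin:
        # Option cleared: the whole screen goes into the snapshot.
        return (0, 0, screen_width, screen_height)
    left, top, right, bottom = image_box
    # Grow the image box by max_pixels on every side, clipped to the screen edges.
    return (max(0, left - max_pixels),
            max(0, top - max_pixels),
            min(screen_width, right + max_pixels),
            min(screen_height, bottom + max_pixels))


print(snapshot_region((10, 500, 110, 540), (1920, 1080), 50))         # (0, 450, 160, 590)
print(snapshot_region((10, 500, 110, 540), (1920, 1080), 50, False))  # (0, 0, 1920, 1080)
```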

Maximum number of snapshots to save when recording a test – Limits the number of snapshots that UFT saves while recording a test in Insight mode.